The optimism gap that's shaping AI policy

Introducing the "Existential Hope in Practice" essay series

Welcome to the Existential Hope in Practice essay series, where our fellows write about concrete ideas and practical examples of how to build a great future.


By Abi Olvera

In brief:

People are wrong about the world in a specific, consistent way. Across dozens of countries, they overestimate crime, poverty, and unhappiness by wide margins.

AI fits this pattern. Daily use of an AI companion reduced anxiety by 30% among people in active war zones. But the public debate focuses on rare crisis moments.

On issue after issue, the expert picture is more moderate than the public one. Experts broadly agree that AI hasn’t caused measurable job losses so far and doesn’t use much water relative to other industries.

Research on forecasting suggests why. The most confident public voices are, on average, less accurate than ordinary people trained to think in probabilities.

The people who track global progress most carefully tend to be more optimistic. But saying so carries social cost, because it reads as not caring about the problems that remain.

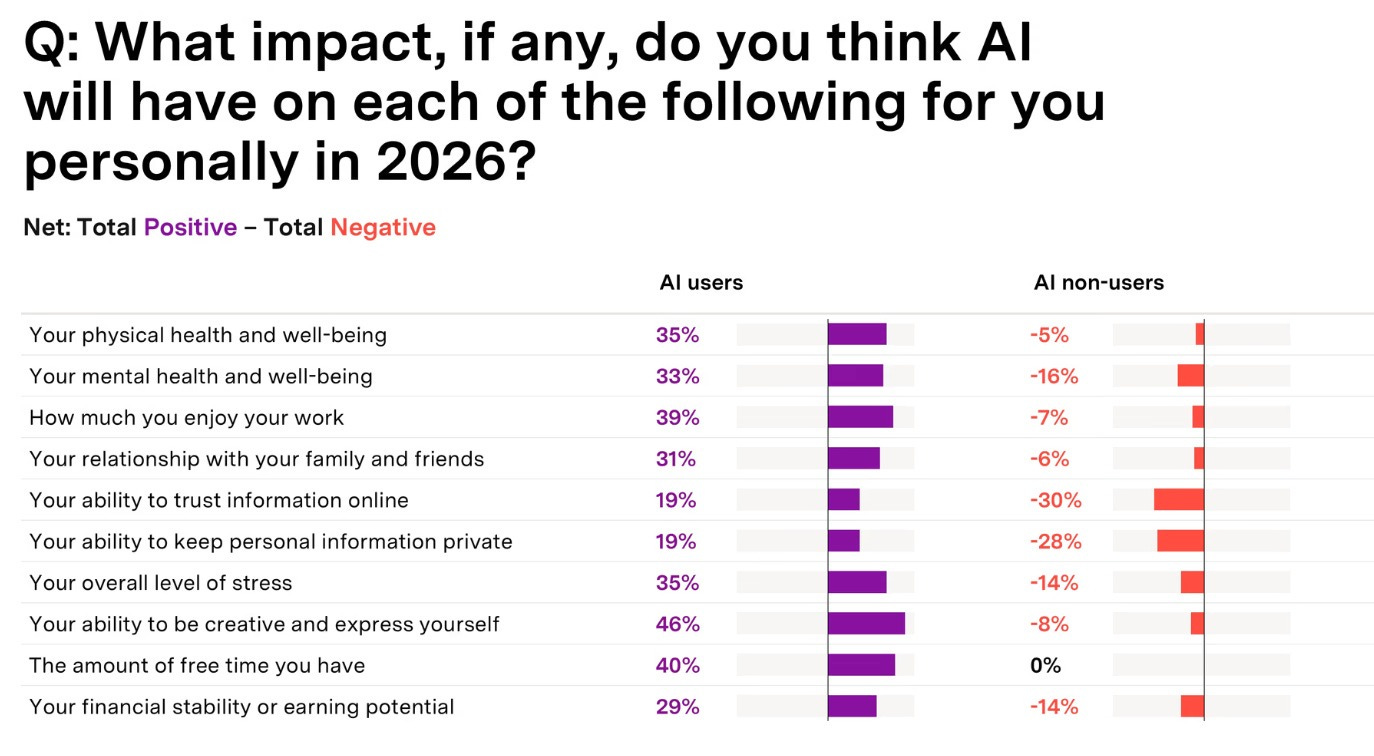

People who actually use AI report feeling curious, happy, and inspired by it. Among users, 46% expect AI to improve their creativity in the coming year, 35% their physical health, 33% their mental health, and 29% their earning potential. Non-users expect that AI would stress them out; users expect the opposite.

Source: NRG survey of 1,000 people, December 2025.

In a Pew survey, 56% of AI experts expected AI to be mostly positive for society. Only 17% of the general public agreed.

You could tell a simple story about this: people fear what they don’t understand, and once they try AI they feel better. But the gap between personal experience and public perception goes much deeper than AI.

People are pessimistic about almost everything they don’t directly experience

Americans guess that fewer than half their neighbors are happy. Ninety percent say they are. South Koreans guess that 25% of their fellow citizens are happy. Again, 90% say they are. The Ipsos “Perils of Perception” studies have tested this across dozens of countries: people systematically overestimate murder rates, teen pregnancy, immigration, and unemployment, nearly always in the pessimistic direction.

The Gapminder project found something even stranger. Quiz people on basic global trends (Is extreme poverty rising or falling? Are more or fewer girls finishing school?) and they score worse than random. Chimpanzees picking answers by chance would outperform educated humans. The information people have points the wrong way.

Why? Partly because news covers what goes wrong and not what goes right, as researcher Hannah Ritchie has documented. But the problem isn’t just missing good news. It’s that we’re building confident, shared mental models of the world that are systematically skewed. We don’t feel uninformed. We feel informed and are wrong.

This distortion is already shaping AI policy

Anxiety and depression affect 21% of Americans. A third of those suffering have never spoken to a healthcare provider about it. For many of them, the realistic alternative to AI-assisted support isn’t a therapist. It’s nothing.

A study of people in active war zones found that daily use of an AI companion reduced anxiety by 30% and depression by 35%. Traditional therapy did better (45% and 50%, respectively), but it requires ongoing access to therapists. A smaller study found that 3% of students using Replika, an AI companion app, said it halted their suicidal ideation.

This research is early. Some of it has industry funding. There are serious questions about how to prevent harmful interactions and how to regulate responsibly as these tools develop. Concerns deserve careful answers.

But notice how the public debate is structured: coverage centers on rare, dramatic cases of crisis. The far more common story of someone with anxiety getting a little help because they can’t access or afford anything else doesn’t make headlines. And so the New York State Senate is considering banning AI from giving therapy-like advice, which would cut off access for the people who need it most.

The pattern extends well beyond mental health. Most people don’t know that, according to broad expert agreement, AI doesn’t use much water relative to other industries, misinformation consumption is concentrated in a small minority who actively seek it out, conspiracy-theory belief hasn’t risen with social media, and AI hasn’t caused measurable job losses so far. On each of these, the expert picture is more moderate than the public picture. And because the public picture is more alarming, it’s the one that drives legislation.

As a U.S. diplomat, I spent years doing exactly this kind of work: checking the public story against the underlying sources. That skill is useful outside government too.

Where to look for context instead of alarm

Advertising-supported media optimizes for attention, and alarming stories capture more of it. When newspapers first reached mass audiences in Victorian England, there was a panic about muggings in London even though crime had actually fallen and was lower than in the rest of the country. Today, most Americans think crime is rising when it isn’t.

But not every information platform works this way. On Reddit, communities like r/AskHistorians are moderated by professional historians who delete low-quality answers. Domain-specific communities like r/microbiology or r/labrats are full of practitioners talking to peers, not competing for clicks from a general audience. If you want to know what biologists actually think about a topic in the news, that’s a better place to look than any newspaper.

Subscription-funded publications, from specialist outlets like STAT News and Wired to independent Substacks, run on a different incentive. Readers keep paying month after month only if they trust you over time. When I correct a mistake publicly on my Substack instead of doubling down, my readers respect me more for it. That feedback loop doesn’t exist when your revenue comes from advertising. Writers like Matt Yglesias, Dan Williams, and Steve Newman have built large followings this way, covering subjects such as media incentives, misinformation, and AI with grounded, evidence-first analysis that wouldn’t survive in an attention economy.

How to think clearly despite the headlines

In 2011, the U.S. intelligence community launched a forecasting tournament to find out who could best predict geopolitical events. A group of ordinary people trained in forecasting best practices and in overcoming cognitive biases won the contest, beating intelligence analysts who had access to classified information. The Good Judgment Project, the professor-led team that recruited and trained them, beat the intelligence community’s own prediction markets by roughly 30%.

What separated these “superforecasters” from the rest of the competitors was a set of habits anyone can learn. They thought in probabilities instead of certainties: not “AI will take all the jobs” or “AI is fine,” but “there’s maybe a 15% chance of measurable job losses in this sector by this date.” They looked at long-run trends instead of individual anecdotes. They asked what specialists in the relevant field actually thought, not what pundits said. And crucially, they kept score on themselves: when they were wrong, they tried to understand why and adjusted.

The lead professor’s earlier research found something even more striking about the experts who get the most airtime. Pundits who organized their thinking around one big narrative (e.g., “technology always destroys jobs”) were consistently worse at predicting than people who held many small, uncertain views and updated them as evidence came in. The most confident voices on television are, on average, the least accurate. That goes a long way toward explaining why the public narrative skews so alarming.

On AI specifically, the trends support moderation. Exponential increases in computing power tend to produce only linear improvements in intelligence. Reliability hasn’t improved at the rate many assumed. Many of the most alarming claims from AI labs, such as those about autonomous cyberattacks, haven’t been independently replicated and are viewed skeptically by the cybersecurity community. Superforecasters estimate that catastrophic AI risk is extremely low in the near term (though both they and domain experts have tended to underestimate how quickly AI improves on benchmarks). And almost every alarming headline I’ve investigated turns out to be missing context that materially changes the story.

You can start doing the same thing. The next time you see an alarming AI headline, look up the primary source. Ask: compared to what? How large is the effect? What does the relevant expert community actually think? Platforms like Metaculus let you watch forecasters assign probabilities to AI outcomes in real time and track how those numbers shift as new evidence comes in. We’d gain a lot by hearing more from thinkers who make specific, verifiable predictions rather than vague warnings.

What we lose when we dismiss the optimists

People assume optimists are naive. The evidence says the opposite. People with more accurate mental models of big global trends tend to be more optimistic, not less. The world has gotten measurably better in many ways over the past few decades: child labor is down globally, extreme poverty has decreased dramatically, and absolute poverty in the U.S. has fallen. The people who track this most carefully see a world that is improving and worth improving further.

Sites like Our World in Data and Gapminder make this easy to see for yourself — and taking Gapminder’s ignorance quizzes is one of the fastest ways to discover how wrong your mental model might be.

Why is the public picture so consistently more alarming than the expert one? There are many reasons, but one I encounter often is social: saying these things out loud can lead people to assume you don’t care about the problems that remain, or that you’re against pushing for more progress.

I still find it scary to say this: I’m optimistic about AI. When I tell people, I worry they’ll think I haven’t done my homework. They’ll think I’ve missed all the alarming coverage and must be either careless or captured by the tech industry.

But my optimism comes from doing the research. When a Meta researcher’s AI agent deleted her entire inbox and headlines declared AI had “gone rogue,” I looked up what actually happened. The conversation grew too large for the system’s working memory (the amount of prior conversation it can reference at once), so it condensed earlier messages into a summary, and that summary dropped the instruction not to delete emails. The incident was a memory management failure, not a Terminator scenario.

That same instinct from my years in diplomacy (go deeper, check the source, find what’s missing from the story) is what makes me optimistic.

Ironically, it would be much easier to be pessimistic. Nobody questions you for assuming the worst. Being optimistic and specific about why requires doing the homework, and then risking the social cost of seeming insufficiently alarmed.

When we dismiss optimism as naivety or, worse, as opposition to the cause, the people who’ve looked most carefully at the evidence become the least likely to share what they’ve found. That’s a problem we can’t afford when the decisions being made about AI (who gets access, what gets regulated, what gets banned) are this consequential.

About the author

Abi Olvera is a former U.S. diplomat with over a decade of service who now focuses on the intersection of governance and technology. She writes Positive Sum, a Substack exploring the systems and institutions that enable abundance and progress, from AI and longevity to housing and everyday innovation. She is also founder of DC Abundance and Research Director at the Golden Gate Institute for AI. Her research explores how institutions can overcome bottlenecks that slow innovation and progress. She serves as the Bulletin of the Atomic Scientists’ AI editorial fellow and on the boards of Rethink Priorities, Institute for AI Policy and Strategy, ML Alignment & Theory Scholars (MATS), and Quantified Uncertainty Research Institute.